The Perception Engineering research group focuses on fundamental issues in virtual reality and robotics. We treat virtual reality as a broad category that encompasses the latest technologies and products in virtual reality (VR), augmented reality (AR), mixed reality (MR), and telepresence. In robotics, we consider core problems such as sensing, sensor fusion, planning, learning, and control.

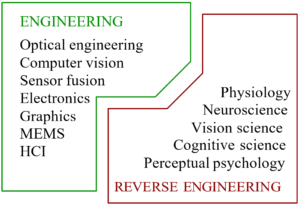

We view VR as a problem of perception engineering, which requires the design, development, and delivery of a perceptual illusion through artificial stimulation of the human senses. Each human sense is susceptible to such illusions; in the case of vision, we are familiar with many optical illusions. Because VR directly affects the human body and can even disrupt its ordinary functions, it is crucial to understand human physiology, neuroscience, and perception, and how they respond to VR technology. We strive to determine perception-based and physiology-based criteria that capture important qualities such as task effectiveness, comfort, and presence. These criteria guide the engineering specifications for VR systems. Typical challenges include viewpoint movement, display artifacts, rendering methods, interaction mechanisms, distributed computation, and limitations of wireless communication.

Perception engineering involves the forward engineering of VR systems, tightly integrated with low-level human considerations that are essentially obtained via reverse engineering (we did not engineer ourselves). Given how academic fields are currently organized, this requires a highly interdisciplinary approach; however, perception engineers might one day emerge who are specifically trained in methodologies based on the science of perception. This would follow a path similar to that of existing engineering fields: civil, mechanical, and electrical engineering derive from physics, while chemical and biological engineering derive from chemistry and biology, respectively. Likewise, perception engineering should derive from perceptual psychology and the related fields of physiology, medicine, and neuroscience, while also building upon existing engineering principles.

Topical

Participate in our research studies

People

Steven M. LaValle

Professor

Email | Call

Scholar

Timo Ojala

Professor

Email | Call

Scholar

Paula Alavesa

University Lecturer

Email | Call

Scholar

Anna LaValle

University Lecturer

Email | Call

Matti Pouke

Senior Research Fellow, Docent

Email | Call

Scholar

Başak Sakçak

Senior Research Fellow, Docent

Email | Call

Scholar

Elmeri Uotila

University Teacher

Email | Call

LinkedIn

GitHub

Vadim Weinstein

Senior Research Fellow (part-time)

Email | Call

Scholar

Evan Center

Postdoctoral researcher

Email | Call

Nicoletta Prencipe

Postdoctoral researcher

Email | Call

LinkedIn | Scholar

Kalle Timperi

Postdoctoral researcher

Email | Call

LinkedIn | Scholar

Aref Amiri

Doctoral researcher

Email | Call

Juho Kalliokoski

Doctoral researcher

Email | Call

LinkedIn

Mikko Korkiakoski

Doctoral researcher

Email | Call

LinkedIn

Eetu Laukka

Doctoral researcher

Email | Call

LinkedIn

Katherine Mimnaugh

Doctoral researcher

Email | Call

Scholar

Iresh Bandara

Research Assistant

Email | Call

LinkedIn | GitHub

Khalil Chakal

Research Assistant

Email | Call

LinkedIn

Filip Georgiev

Research Assistant

Email | Call

LinkedIn | GitHub

Lorenzo Medici

Research Assistant

Email | Call

LinkedIn | GitHub

Alexis Chambers

Planning Officer

Email | Call

Alex LaValle

Research Programmer

Email | Call

Current Projects

CHiMP – Challenges Hidden in Motion Primitives (Academy of Finland)

ILLUSIVE – Foundations of Perception Engineering (European Research Council & University of Oulu)

ViRTIA – Virtual Reality-Based Telepresence through Improved Autonomy (University of Oulu / Infotech)

Past Projects

COMBAT (Academy of Finland / Strategic Research Council)

HUMOR – HUMan Optimized xR (Business Finland and industry)

HUMORcc (Business Finland)

OpenARcc – Next generation Open Source AR glasses (Business Finland)

PERCEPT – Human-Perception Based Planning and Filtering (Academy of Finland)

PIXIE – Plausibility Paradoxes in Scaled-Down Virtual Reality (Academy of Finland)

TELETAPPIcc (Business Finland)

Selected Publications

Steven M. LaValle. Virtual Reality. Cambridge University Press.

2024

Berthier M, Prencipe N & Provenzi E (2024) Split-quaternions for perceptual white balance. IEEE Signal Processing Magazine, in press.

LaValle SM, Center EG, Ojala T, Pouke M, Prencipe N, Sakçak B, Suomalainen M, Timperi KG & Weinstein V (2024) From virtual reality to the emerging discipline of perception engineering. Annual Review of Control, Robotics, and Autonomous Systems 7. https://doi.org/10.1146/annurev-control-062323-102456

2023

Bilevich MM, LaValle SM & Halperin D (2023) Sensor localization by few distance measurements via the intersection of implicit manifolds. Proc. 2023 IEEE International Conference on Robotics and Automation (ICRA 2023), London, UK, 1912-1918. https://doi.org/10.1109/ICRA48891.2023.10160553

Kundu S, Bahoo Y, Çağırıcı O & LaValle SM (2023) Arithmetic billiard paths revisited: Escaping from a rectangular room. Proc. 27th International Conference on Methods and Models in Automation and Robotics (MMAR 2023), Międzyzdroje, Poland, 478-483. https://doi.org/10.1109/MMAR58394.2023.10242582

LaValle AJ, Sakçak B & LaValle SM (2023) Bang-bang boosting of RRTs. Proc. 2023 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2023), Detroit, MI, USA, 2869-2876. https://doi.org/10.1109/IROS55552.2023.10341760

Mimnaugh KJ, Center EG, Suomalainen M, Becerra I, Lozano E, Murrieta-Cid R, Ojala T, LaValle SM & Federmeier KD (2023) Virtual reality sickness reduces attention during immersive experiences. IEEE Transactions on Visualization and Computer Graphics 29(11):4394-4404. https://doi.org/10.1109/TVCG.2023.3320222

Sakçak B, Timperi KG & LaValle SM (2023) A mathematical characterization of minimally sufficient robot brains. The International Journal of Robotics Research. https://doi.org/10.1177/02783649231198898

Sakçak B, Weinstein V & LaValle SM (2023) The limits of learning and planning: Minimal sufficient information transition systems. In: LaValle SM, O’Kane JM, Otte M, Sadigh D & Tokekar P (eds.) Algorithmic Foundations of Robotics XV (WAFR 2022). Springer Proceedings in Advanced Robotics, vol 25. Springer, Cham. https://doi.org/10.1007/978-3-031-21090-7_16

Ylipulli J, Pouke M, Ehrenberg N & Keinonen T (2023) Public libraries as a partner in digital innovation project: Designing a virtual reality experience to support digital literacy. Future Generation Computer Systems 149:594-605. https://doi.org/10.1016/j.future.2023.08.001

2022

Arora N, Suomalainen M, Pouke M, Center EG, Mimnaugh KJ, Chambers AP, Pouke S & LaValle SM (2022) Augmenting immersive telepresence experience with a virtual body. IEEE Transactions on Visualization and Computer Graphics 28(5):2135-2145. https://doi.org/10.1109/TVCG.2022.3150473

Baraldo A, Bascetta L, Caprotti F, Chourasiya S, Ferretti G, Ponti A & Sakçak B (2022) Automatic computation of bending sequences for wire bending machines. International Journal of Computer Integrated Manufacturing. https://doi.org/10.1080/0951192X.2022.2043563

Çağırıcı O, Bahoo Y & LaValle SM (2022) Bouncing robots in rectilinear polygons. Proc. 26th International Conference on Methods and Models in Automation and Robotics (MMAR 2022), Międzyzdroje, Poland, 193-198. https://doi.org/10.1109/MMAR55195.2022.9874340

Center EG, Gephart AM, Yang P-L & Beck DM (2022) Typical viewpoints of objects are better detected than atypical ones. Journal of Vision 22(12):1,1–14. https://doi.org/10.1167/jov.22.12.1

Center EG, Mimnaugh KJ, Häkkinen J & LaValle SM (2022) Human perception engineering. In: Alcañiz M, Sacco M & Tromp JG (eds.) Roadmapping Extended Reality: Fundamentals and Applications, Wiley, 157-181.

Halkola J, Suomalainen M, Sakçak B, Mimnaugh KJ, Kalliokoski J, Chambers AP, Ojala T & LaValle SM (2022) Leaning-based control of an immersive-telepresence robot. Proc. 21st IEEE International Symposium on Mixed and Augmented Reality (ISMAR 2022), Singapore, 576-583. https://doi.org/10.1109/ISMAR55827.2022.00074

Kalliokoski J, Sakçak B, Suomalainen M, Mimnaugh KJ, Chambers AP, Ojala T & LaValle SM (2022) HI-DWA: Human-influenced dynamic window approach for shared control of a telepresence robot. Proc. 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2022), Kyoto, Japan, 7696-7703. https://doi.org/10.1109/IROS47612.2022.9981367

Pakanen M, Alavesa P, van Berkel N, Koskela T & Ojala T (2022) “Nice to see you virtually”: Thoughtful design and evaluation of virtual avatar of the other user in AR and VR based telexistence systems. Entertainment Computing 40:100457. https://doi.org/10.1016/j.entcom.2021.100457

Pouke M, Center EG, Chambers AP, Pouke S, Ojala T & LaValle SM (2022) The body scaling effect and its impact on physics plausibility. Frontiers in Virtual Reality 3:869603. https://doi.org/10.3389/frvir.2022.869603

Pouke M, Ylipulli J, Uotila E, Sitomaniemi A-K, Pouke S & Ojala T (2022) A qualitative case study on deconstructing presence for young adults and older adults. PRESENCE: Virtual and Augmented Reality 31:257-281. https://doi.org/10.1162/pres_a_00397

Sakçak B & Bascetta L (2022) Safe motion planning for a mobile robot navigating in environments shared with humans. Proc. 2022 IEEE 18th International Conference on Automation Science and Engineering (CASE 2022), Mexico City, Mexico, 2074-2079. https://doi.org/10.1109/CASE49997.2022.9926538

Suomalainen M, Sakçak B, Widagdo A, Kalliokoski J, Mimnaugh KJ, Chambers AP, Ojala T & LaValle SM (2022) Unwinding rotations improves user comfort with immersive telepresence robots. Proc. 17th ACM/IEEE International Conference on Human-Robot Interaction (HRI 2022), Sapporo, Japan, 511-520. https://doi.org/10.1109/HRI53351.2022.9889388

Tiwari K, Sakçak B, Routray P, Manivannan M & LaValle SM (2022) Visibility-inspired models of touch sensors for navigation. Proc. 2022 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2022), Kyoto, Japan, 13151-13158. https://doi.org/10.1109/IROS47612.2022.9981084

Weinstein V, Sakçak B & LaValle SM (2022) An enactivist-inspired mathematical model of cognition. Frontiers in Neurorobotics 16. https://doi.org/10.3389/fnbot.2022.846982

2021

Mimnaugh KJ, Suomalainen M, Becerra I, Lozano E, Murrieta-Cid R & LaValle SM (2021) Analysis of user preferences for robot motions in immersive telepresence. Proc. IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2021), Prague, Czech Republic, 4252-4259. https://doi.org/10.1109/IROS51168.2021.9636852

Nilles AQ, Ren Y, Becerra I & LaValle SM (2021) A visibility-based approach to computing nondeterministic bouncing strategies. The International Journal of Robotics Research 40(10-11):1196-1211. https://doi.org/10.1177/0278364921992788

Pouke M, Mimnaugh KJ, Chambers A, Ojala T & LaValle SM (2021) The plausibility paradox for resized users in virtual environments. Frontiers in Virtual Reality 2:655744. https://doi.org/10.3389/frvir.2021.655744

Sakçak B & LaValle SM (2021) Complete path planning that simultaneously optimizes length and clearance. Proc. 2021 IEEE International Conference on Robotics and Automation (ICRA 2021), Xi’an, China, 10100-10106. https://doi.org/10.1109/ICRA48506.2021.9561784

Shukla R, Routray PK, Tiwari K, LaValle SM & Manivannan M (2021) Monofilament whisker-based mobile robot navigation. Proc. 2021 IEEE World Haptics Conference (WHC 2021), Montreal, Canada, 1150. https://doi.org/10.1109/WHC49131.2021.9517235

Suomalainen M, Abu-Dakka FJ & Kyrki V (2021) Imitation learning-based framework for learning 6-D linear compliant motions. Autonomous Robots 45:389–405. https://doi.org/10.1007/s10514-021-09971-y

Suomalainen M, Mimnaugh KJ, Becerra I, Lozano E, Murrieta-Cid R & LaValle SM (2021) Comfort and sickness while virtually aboard an autonomous telepresence robot. In: Bourdot P, Alcañiz Raya M, Figueroa P, Interrante V, Kuhlen TW & Reiners D (eds.) Virtual Reality and Mixed Reality (EuroXR 2021). Lecture Notes in Computer Science 13105:3-24. Springer, Cham. https://doi.org/10.1007/978-3-030-90739-6_1

2020

Alavesa P, Pakanen M, Ojala T, Pouke M, Kukka H, Samodelkin A, Voroshilov A & Abdellatif M (2020) Embedding virtual environments into the physical world: Memorability and co-presence in the context of pervasive location-based games. Multimedia Tools and Applications 79:3285–3309. https://doi.org/10.1007/s11042-018-7077-z

Becerra I, Suomalainen M, Lozano E, Mimnaugh KJ, Murrieta-Cid R & LaValle SM (2020) Human perception-optimized planning for comfortable VR-based telepresence. IEEE Robotics and Automation Letters 5(4):6489-6496. https://doi.org/10.1109/LRA.2020.3015191

Browning MHEM, Mimnaugh KJ, van Riper CJ, Laurent HK & LaValle SM (2020) Can simulated nature support health? Comparing short, single-doses of 360-degree nature videos in virtual reality with the outdoors. Frontiers in Psychology 10:2667. https://doi.org/10.3389/fpsyg.2019.02667

Nilles AQ, Pervan A, Berrueta TA, Murphey TD & LaValle SM (2020) Requirements of collision-based micromanipulation. Proc. Algorithmic Foundations of Robotics XIV (WAFR 2020), 210-226. https://doi.org/10.1007/978-3-030-66723-8_13

Pakanen M, Alavesa P, Arhippainen L & Ojala T (2020) Stepping out of the classroom – Anticipated user experiences of web-based mirror world like virtual campus. International Journal of Virtual and Personal Learning Environments 10(1):1-23. https://doi.org/10.4018/IJVPLE.2020010101

Pouke M, Mimnaugh K, Ojala T & LaValle SM (2020) The plausibility paradox for scaled-down users in virtual environments. Proc. 2020 IEEE Conference on Virtual Reality and 3D User Interfaces (IEEE VR 2020), Atlanta, GA, USA, 913-921. https://doi.org/10.1109/VR46266.2020.00014

Suomalainen M, Nilles AQ & LaValle SM (2020) Virtual reality for robots. Proc. 2020 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2020), Las Vegas, NV, USA, 11458-11465. https://doi.org/10.1109/IROS45743.2020.9341344

Ylipulli J, Pouke M, Luusua A & Ojala T (2020) From hybrid spaces to “imagination cities”: A speculative approach to virtual reality. In: Willis KS & Aurigi A (eds.) The Routledge Companion to Smart Cities, Routledge, London. https://www.routledge.com/The-Routledge-Companion-to-Smart-Cities/Willis-Aurigi/p/book/9781138036673

Media

Virtual Reality – Steven LaValle

Nyt tuli kiire metaversumiin [Now there is a rush to the metaverse] (Tekniikan Maailma 5/2022) (in Finnish)

Tiedon valossa: Virtuaalitodellisuuden uudet maailmat [In the light of knowledge: The new worlds of virtual reality] (in Finnish)